@kineticworks:

Since you raised this question, I was also curious how much quality is sacrificed when converting from RAW to JPEG. I carried out a couple of experiments and reviewed the changes; this is not a scientific review, just observations.

I started with a 16-bit grayscale gradient, black on the left and white on the right, as you can see below:

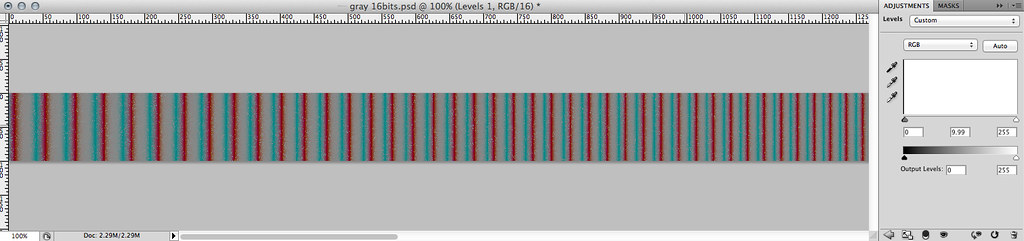

I then converted it to 8 bits in PS, saved it as a PSD with lossless compression, reloaded it, and placed it as a separate layer in the same 16-bit grayscale document. Then I took the difference between the two layers. On screen you will not see much: everything is BLACK, which means that visually they are the SAME. But when you look at the histogram and use Levels to push the adjustment to the extreme, you will see something like this:

As you can see, across the full grayscale spectrum the extreme Levels adjustment shows differences in cyan and red. So we can observe changes, but only when the gamma is pushed to an extreme. You will also notice that the frequency of the changes increases from the black region to the white region. A lower frequency and wider bands mean your eyes are more likely to notice the changes in the shadow areas than in the highlight areas.
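For anyone who wants to check the 16-bit to 8-bit round trip without Photoshop, here is a minimal sketch in plain Python. It is not what PS does internally: the ramp width, the round-to-nearest rule in `to_8bit`, and the final stretch that stands in for the extreme Levels adjustment are all my assumptions.

```python
# 16-bit horizontal ramp: black (0) on the left, white (65535) on the right.
WIDTH = 4096  # hypothetical image width
ramp16 = [round(x * 65535 / (WIDTH - 1)) for x in range(WIDTH)]

def to_8bit(v16):
    # Round-to-nearest rescale from 0..65535 to 0..255 (assumed rule;
    # Photoshop's exact conversion may differ).
    return (v16 * 255 + 32767) // 65535

def to_16bit(v8):
    # Expand back to 16 bits; 255 * 257 == 65535.
    return v8 * 257

# Convert down, convert back up, then take the "difference layer".
round_trip = [to_16bit(to_8bit(v)) for v in ramp16]
diff = [abs(a - b) for a, b in zip(ramp16, round_trip)]

max_diff = max(diff)
levels = len(set(round_trip))
print(f"distinct levels after round trip: {levels}")
print(f"largest difference: {max_diff} of 65535 ({100 * max_diff / 65535:.2f}%)")

# Stand-in for the extreme Levels adjustment: stretch the difference
# so its largest value maps to full white.
stretched = [d * 65535 // max_diff for d in diff]
```

The difference never exceeds half of one 8-bit step, which is why the difference layer looks pure black until it is stretched.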

Below is a 100% crop in the shadow area.

This is the extreme shadow area, and the changes are pretty uniform. So while the 16-bit to 8-bit conversion did indeed lose information, it is the least of your concerns since the loss is so uniform.

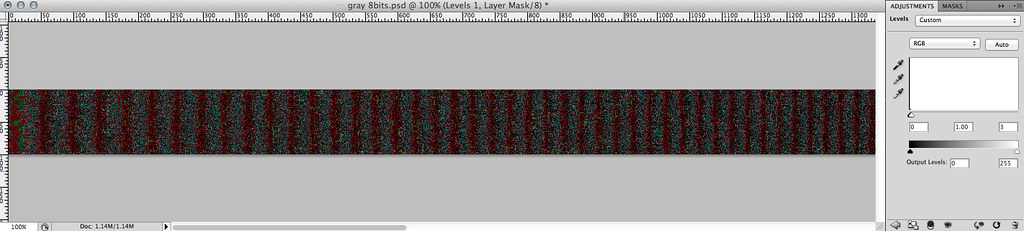

Now let's compare an 8-bit RAW with an 8-bit JPEG at the highest quality (12) that PS offers, and see what kind of loss we get. The loss due to JPEG compression follows a similar pattern, but even at this point you can observe more erratic behaviour. Let's go to the 100% crop of the shadow area:

Now, in the extreme shadows, you can see that the quantization process gives very bad results after decoding. The banding also basically stretches across each interval. Still, I must say this is pretty good, since it is not observable under normal circumstances. Again, this is extreme gamma tweaking to reveal the inaccuracy of colour representation caused by JPEG encoding.

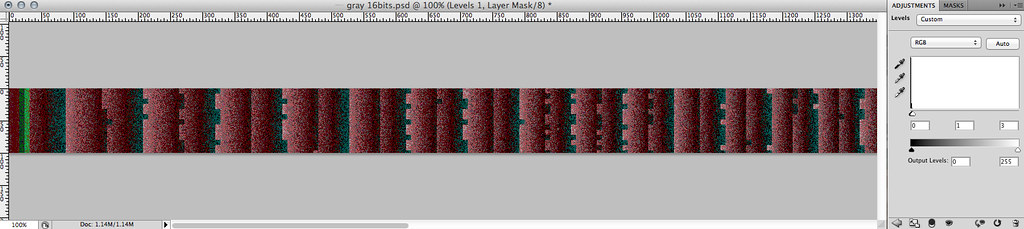

How about if we choose medium quality for the JPEG encoding? I will go straight to the 100% crop, shown below:

Now this is drastic, right? Not only does the banding look bad, but compared to the highest quality it is not uniform, and the quantization artifacts are very pronounced. Still, I must say it doesn't look too obvious under normal circumstances.
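To give a feel for why a coarser JPEG setting hurts the shadows more, here is a rough sketch of the quantization step on a single 8x8 block, in plain Python. It is only an illustration under my own assumptions: real JPEG uses a per-frequency quantization table, a level shift, and entropy coding, whereas this uses one uniform `step` for every DCT coefficient, and the step values 1 and 16 are hypothetical stand-ins, not Photoshop's quality 12 or medium settings.

```python
import math

N = 8  # JPEG works on 8x8 blocks

def basis(k, n):
    # Orthonormal DCT-II basis value for frequency k at sample n.
    scale = math.sqrt(1 / N) if k == 0 else math.sqrt(2 / N)
    return scale * math.cos((2 * n + 1) * k * math.pi / (2 * N))

def dct2(block):
    # Forward 2-D DCT: pixels [x][y] -> coefficients [u][v].
    return [[sum(block[x][y] * basis(u, x) * basis(v, y)
                 for x in range(N) for y in range(N))
             for v in range(N)] for u in range(N)]

def idct2(coeffs):
    # Inverse 2-D DCT: coefficients [u][v] -> pixels [x][y].
    return [[sum(coeffs[u][v] * basis(u, x) * basis(v, y)
                 for u in range(N) for v in range(N))
             for y in range(N)] for x in range(N)]

def jpeg_like_round_trip(block, step):
    # Quantize every coefficient with one uniform step (a simplification),
    # then decode and round back to 8-bit pixel values.
    quantized = [[round(c / step) * step for c in row] for row in dct2(block)]
    decoded = idct2(quantized)
    return [[min(255, max(0, round(p))) for p in row] for row in decoded]

def max_error(a, b):
    # Largest absolute pixel difference between two blocks.
    return max(abs(p - q) for ra, rb in zip(a, b) for p, q in zip(ra, rb))

# Deep-shadow gradient: values 4..7 rising from left to right.
shadow = [[4 + y // 2 for y in range(N)] for _ in range(N)]

fine = jpeg_like_round_trip(shadow, step=1)     # stand-in for high quality
coarse = jpeg_like_round_trip(shadow, step=16)  # stand-in for medium quality

print("original row:", shadow[0])
print("fine row:    ", fine[0])
print("coarse row:  ", coarse[0])
print("max error (fine, coarse):",
      max_error(shadow, fine), max_error(shadow, coarse))
```

In this toy setup the fine step should leave the block essentially intact, while the coarse step changes the pixels by different amounts along the row. That uneven error is the same kind of thing the medium-quality crop shows.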

So, back to your issue: since you mentioned that your source is noisy and was shot in dark scenes, you might want to de-noise the dark regions as much as possible so that they don't look so bad when encoded as JPEG. But if you keep your JPEG encoding at the highest quality, I'd say it should be good enough for your client. Your client's eyes are not a histogram, and they should also refrain from extreme post-processing on the JPEG files, since the format is lossy and every re-save will lose more information.

I hope this helps.