
Archive: from the old newbie guide.

- Thread starter zoossh


zoossh

Senior Member

Travel: Cold weather accessories

Gloves

Japan - Axesquin

Winter clothing

Sweden - Klattermusen

Travel: Outdoor accessories

Sleeping bags

Sweden - Klattermusen

Resting accessories

USA - Thermarest under Cascade Designs

USA - Mountain Safety Research

Travel: Trekking accessories

Walking sticks

USA - Tracks under Cascade Designs

Multitools

Switzerland - Victorinox

Hydration packs

Platypus under Cascade Designs

Travel: Weather measuring accessories

Germany - Minox

Travel: General accessories

USA - Kinesis

.


zoossh

Senior Member

Product Listing: Digital systems other than DSLR/TLR/PNS

Medium format: AF camera module for film back

Japan - Mamiya マミヤ・オーピー 645-AFD with Mamiya 645 AF bayonet mount and limited M645 mount

Medium format module: AF SLR 6 x 4.5 cm (dual compatibility for digital/film back)

Japan - Mamiya マミヤ・オーピー 645-AFD-II with Mamiya 645 AF bayonet mount and limited M645 mount

Germany - Carl Zeiss Ikon Contax 645 AF Digital with proprietary lens mount and MAM-1 adaptor to Hasselblad C, CF, CFE, CFI, F and FE fittings.

Medium format module: AF SLR 6 x 6 cm (dual compatibility for digital/film back)

Germany - Rollei Franke & Heidecke Rolleiflex 6008 AF & Integral 2 (acquired by Schneider Kreuznach in 1987)

Germany - Rollei Franke & Heidecke Rolleiflex Hy6 with Rollei bayonet mount compatible with System-6000 lenses, also covers 4.5 x 6 cm format (acquired by Schneider Kreuznach in 1987)

Medium format module: MF (dual compatibility for digital/film back)

Switzerland - Pignons Alpa

Medium format: AF view, dual compatibility for digital/film back

Japan - Mamiya マミヤ・オーピー RZ67 Pro I and Pro II with special bayonet mount (need interface plate for digital backs)

Japan - Mamiya マミヤ・オーピー RZ67 Pro IID (home), (B&H) with special bayonet mount

Japan - Mamiya マミヤ・オーピー RB67 (RB for revolving back) Pro, Pro-S and Pro-SD MF SLR with Mamiya RB breech-lock bayonet mount

Medium-Large format system: Digital integrated or separate view-and-back

Japan - Mamiya マミヤ・オーピー (usa), (uk) fronts/backs/integrated

USA - Leaf backs (now under Kodak)

USA - Kodak DCS Pro Back (discontinued), (review from luminous-landscape)

USA - Mega-vision backs

USA - Better light backs

Switzerland - Sinar (now under German Jenoptik)

Sweden - Hasselblad (SE in english), Medium format Digital backs

Sweden - 503CWD, square medium format MF DSLR with CFE, CFi, and FE mount

Denmark - Phase One digital backs

Denmark - Imacon Ixpress digital backs (review from luminous-landscape) acquired by Hasselblad in 2004

Germany - Carl Zeiss Ikon Contax 645 (usa) fronts

Germany - Leica R system (usa) fronts & backs

.


zoossh

Senior Member

Medium format accessories

Sweden - Hasselblad Introduction, (B+H) Film magazines and back

Sweden - Hasselblad Introduction, (B+H) Film motorized winder and accessories

camera to back adaptor

Italy - Silvestri 6x6 cm

Switzerland - Sinar

View and bellows for digital/dual backs

Germany - Rollei

Italy - Silvestri

Sweden - Hasselblad H2 dual platform view (AF with HC lens mount or with CF adaptor) (B&H)

Japan - Komamura Horseman 駒村 LD Pro (for Hasselblad V & H, Mamiya 645 backs, Canon EOS and Nikon F bodies, and for Linhof, Horseman, Hasselblad, Mamiya and Pentax medium/large format lenses)

Japan - Komamura Horseman 駒村 SWD II Pro (MF, for Hasselblad V & H, Mamiya 645 & Contax 645 backs, and for SW lenses)

.

.


zoossh

Senior Member

Large format camera

View camera, Field camera

Japan - Horseman LX 5" x 4"

USA - Eastman Kodak View Camera No. 2-D

USA - Deardorff (wood)

USA - K. B. Canham (wood)

UK - Gandolfi (wood)

The Netherlands - Cambo (now under Calumet from USA)

.


zoossh

Senior Member

Product Listing: Special camera system

Panorama camera 全景照相机: digital and film

Japan - Fujifilm GX617 film rangefinder (review by James Hoye, info not found at Fujifilm)

Japan - Komamura Horseman 駒村 jp, us, view and bellows for digital backs, view and film backs.

- SW617 pro panoramic camera with interchangeable Schneider and Rodenstock SW lenses, viewfinder and film backs (6x12 and 6x17)

- SW612 and SW612 pro panoramic camera with interchangeable Rodenstock SW lenses and film backs (6x7, 6x9 and 6x12)

Switzerland - Seitz digital/film panorama body components

Germany - Kamera Werk Dresden Noblex film panorama camera and accessories

USA/China - Panflex 潘福莱 and Widepan (made by Phenix 南昌凤凰 in China) film panorama camera

Special effects & special camera

Austria - Lomo's lomography shop (US)

HK - Holga's Holgamods (US)

USA - Polaroid (sg)

Canada - Point Grey

Japan - Fujipet, gallery

Twin lens reflex camera (wikipedia)

Advantage: larger film format, Disadvantage: slow to use, parallax, reversed viewfinder

Germany - Rollei Rolleiflex (acquired by Schneider Kreuznach in 1987)

Germany - Rollei Franke & Heidecke medium format film-only 6 x 6 cm fixed lens TLR Rolleiflex 4.0 FW (f/4, wide 50mm) and Rolleiflex 4.0 FT (f/4, tele 135mm) with Schneider Kreuznach lens

Germany - Rollei Franke & Heidecke medium format film-only 6 x 6 cm fixed lens TLR Rolleiflex 2.8 FX (f/2.8, 80mm) with Carl Zeiss Ikon lens

Japan - Yashica ヤシカ 6 x 6 cm film fixed lens TLR with Tomioka (branded as Yashica) lens

Japan - Endo 遠藤 Pigeon ピジョン 6 x 6 cm film fixed lens TLR (made by Yashica and later Shinano 信濃光機) with Tomioka lens

.


zoossh

Senior Member

Product Listing: Compact/Prosumer accessories

Compact camera focal-length-conversion lens/filters/adapter rings

Japan: Fujifilm Finepix and other compacts uk, jp

Japan: Matsushita National Panasonic

Japan: Nikon Coolpix sg

Japan: Olympus sg

Japan: Sony sg

Japan: Kenko

Japan: Yoshida Raynox

Japan: Toda Seiko Digital King

Taiwan: Giottos w

USA: Lensbabies w

USA: Peak optics

USA: Zhumell

USA: Sakar Tele/wide angle convertor, rings & filters

Czech: Meopta

Germany: Century Precision Optics, now under Schneider

Compact camera underwater housing

Japan: Fujifilm uk, us, jp

Japan: Sony sg

Compact camera casing

Japan: Fujifilm uk, us, jp

Japan: Sony sg

Compact camera power

Japan: Sony sg

Compact camera flash

Japan: Sony sg

.


zoossh

Senior Member

Basic Concepts: Understanding of basic photography

Basic photography can be broadly divided into 3 areas.

1. aesthetic appreciation and vision.

2. technical pre-processing of light before collection by digital sensor

3. technical post-processing of data and reproduction on screen display or printout.

This categorisation helps one concentrate on what one is deficient in and identify the bottleneck in one's work. Each area precedes the next, with point 1 being the most important, followed by points 2 and 3.

Distributing the importance across various factors also helps to avoid obsession with a single factor, which very often masks the photographer's ability to improve. It also helps to organise the various aspects of photography for step-by-step understanding.

4. Summary of "Various photographic aspects"

1. Various aspects in photography

1.1 Interplay between the user, the equipment and the subject.

1.2 The interplay relationship between equipment and subject - light and space

1.3 Technical, situational and handling factors

2. Components of Light

2.1 Tones, hues and saturation

2.2 Exposure and tones

2.3 Quality of light and colors

2.4 Light interplays with space - direction of light

2.5 Light interplays with time - motion

3. Exposure and tones

3.1 Overview & foreword regarding exposure

3.2 Exposure values (EV)

3.3 Tonal range and dynamic range

3.4 Shadows, midtones, highlight

3.5 Exposure latitude and details

3.6 Ability to push back details

3.7 Exposure distribution (histogram) and composition

3.8 Exposure and its zonal significance

3.9 Frame intensity and inverse square law

4. Quality of light and colors

4.1 About colors

4.2 Electromagnetic spectrum and wavelength

4.3 Additive and subtractive color mixing

4.4 The color wheel and its uses

4.5 Different light source and color temperature

4.6 Color balance and white balance

4.7 Hues and skin hues

4.8 Saturation

4.9 Reference of Colors nomenclature

5. Colors in in-camera and post-camera processing

5.1 RAW and color balance

5.2 Color gamut, spectrum and space: Adobe RGB vs. sRGB

5.3 For the color blind

6. Types of lights

6.1 Natural, ambient and artificial lights

6.2 Diurnal changes

6.3 Seasonal changes

6.4 Atmospheric factors

6.5 Incandescence

6.6 Fluorescence & Neon

6.7 Mixed lights

6.8 Continuous and flash lights

6.9 Reflection and bounce

.


zoossh

Senior Member

1. Various aspects in photography

1.1 Interplay between the user, the equipment and the subject.

Is photography all about the photographer?

Some will say it is all about who is behind the camera (the photographer), but this cannot be taken literally.

I have a different opinion though. It might be due to my profession, where some see themselves as higher beings while others take the humbler attitude of being merely cobblers, doing what we can at the mercy of nature. Arrogance in anyone freaks me out, regardless of how competent he is. Similarly in photography, I believe no one should see themselves as the only thing that matters. Without our tools and our subjects we cannot achieve much, like cooks without good ingredients and a suitable, well-equipped kitchen. Every single factor makes a difference.

As a DSLR user who has been shooting infrequently for less than 2 years, perhaps I should stop calling myself a newbie and call myself a beginner instead. I do not have the qualifications to challenge the seniors, but they may not necessarily understand what a newbie needs. Other than having the equipment and tools, one needs to know how to use them. Having the equipment and not knowing at all how to use it is as good as not having it. More often, though, the problem is not a total lack of know-how but not knowing how to fully optimise the equipment. The benefit of better equipment may then be minimal or suboptimal and may not outweigh the cost of acquiring it. Photography is not exactly a cheap hobby like drinking tea or playing badminton, though it is still cheaper than hobbies such as car modification and antiques. However, without the equipment, and without alternative equipment that can do the same thing, the user counts for nothing.

Photography is probably, in my opinion, an interplay between 3 components, the user, the equipment and the subject.

The natural tendency of a newbie is to find too much information and not know how or where to start or what to read, and frustration with the current equipment may end in wishing for better equipment to get better results. Of course, better equipment usually has better specifications and abilities in many factors, not just one, so it will be easier to use and give better results in a number of cases. However, it depends on whether the newbie has identified the right problem and whether the better equipment actually solves that problem. If the bottleneck lies in a factor that requires the user's understanding and control, and the user cannot make better use of the better equipment, he may be back to square one. My suggestion is to find out what is wrong first and not take it for granted that a better camera or lens will solve the problem. Not knowing what is wrong makes it likely that one buys the wrong equipment and sees no difference. Alternatively, one can save money by improving one's knowledge and skill, and find that one can actually cope with a lower-end camera and still get great results.

Sometimes advice from professionals and experts is not necessarily useful or pragmatic. Take the local Nepalese porters who easily carry 15-30kg of stuff up and down the mountain treks in flip-flop slippers. If you follow their example instead of getting proper footwear, you may hurt yourself. The natural tendency of professionals and experts is an ego around the user factor, because they have achieved that mastery while newbies haven't. While they can get around obstacles more easily, the same cannot be expected of a newbie. One cannot expect a basic pinhole camera to capture fast-moving action, or a wide angle lens to capture a bird 100m away. The right equipment is required for each type of vision, and sometimes the equipment limits the user's ability in certain aspects. Conversely, the right equipment either enhances the user's ability or makes things easier for the user.

And while the newbie mentality is to take the easy way out, the expert mentality is to go the hard way. Of course, there are exceptions in both camps, but there is a certain truth in both. In the old days there was less automation, and good photography came only after a long time of practice and manual control. There was no choice. Today there is increasing automation, from autofocus, autoexposure and auto color balance to automatic modes that take over depth of field and shutter duration control, but they haven't taken over composition yet, and they can never take over the physical spatial relationship between the photographer and camera and the subject. Even so, we need to understand the automation to make it do the job for us, or know how to override the settings to our preference. If there is a shortest and fastest way, there is no reason to persist in going the longest and slowest way, except for one thing: the good and the best need not lie on either path, and one has to explore to find them.

And one last thing that is always left out of the equation: the subject. You can't shoot an aurora in Singapore. And you can't turn a totally ugly subject, without potential and without makeup, into a beautiful model. Photographers are not gods, not even when they are shooting. That is one thing to bear in mind. Give some credit to the subject and the equipment, and don't just bash the idea of seeking out better subjects and equipment, as if everything is about the user.

1.2 The interplay relationship between equipment and subject - light and space

Is photography all about light?

Light is an indispensable and very critical factor. It is very likely that one would initially think of light as simply exposure, but light is more than that, and we will talk about it later.

There is also space, which is inter-related with light but does not depend on it. The distances between the sensor, the optical centre of the lens, the subject and everything else in the frame surrounding the subject form a spatial relationship that is defined within an angle of view. This relationship depends on where the photographer stands and places his camera, on the physical specifications of his camera body and lens, and on the focal length he sets. On top of that, what the frame contains, and when, depends on the situation, which can be a matter of luck, patience or intelligent planning.
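To put rough numbers on that spatial relationship, here is a small sketch of my own, not something from the original guide. It uses the usual thin-lens approximation for a rectilinear lens focused far away, where the angle of view is 2 * atan(sensor size / (2 * focal length)). The function names and the 36mm sensor width (a full-frame sensor) are assumptions chosen just for illustration.

```python
import math


def angle_of_view_deg(sensor_dim_mm: float, focal_length_mm: float) -> float:
    """Approximate angle of view (degrees) along one sensor dimension.

    Assumes a rectilinear lens focused at a distant subject.
    """
    return math.degrees(2 * math.atan(sensor_dim_mm / (2 * focal_length_mm)))


def field_width_m(sensor_dim_mm: float, focal_length_mm: float,
                  distance_m: float) -> float:
    """Approximate width of the scene covered at a given subject distance."""
    return distance_m * sensor_dim_mm / focal_length_mm


if __name__ == "__main__":
    sensor_width_mm = 36.0  # assumed full-frame sensor width
    for focal in (18, 50, 300):
        aov = angle_of_view_deg(sensor_width_mm, focal)
        width = field_width_m(sensor_width_mm, focal, distance_m=100)
        print(f"{focal}mm: about {aov:.1f} degrees, "
              f"covering about {width:.0f}m across at 100m")
```

Running it for a few focal lengths shows why standing position and focal length matter so much: under these assumptions a short focal length takes in a very wide slice of the scene at 100m, so a small subject at that distance fills only a tiny part of the frame.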

Like I said before, photography is, in my opinion, an interplay between 3 components: the user, the equipment and the subject. The three components are linked together by space and light. Once the spatial relationship is established, correct focusing brings the light from each point in the frame onto a corresponding point on the sensor to achieve sharpness.

.


zoossh

Senior Member

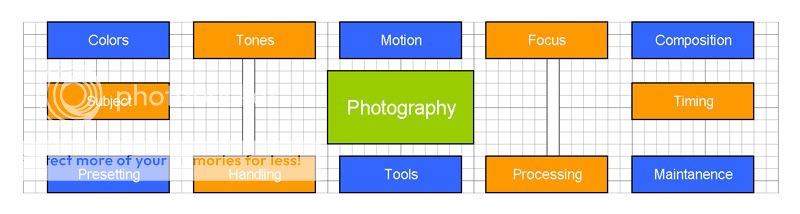

1.3 Technical, situational and handling factors

What are the factors about photography that one needs to learn about?

I came up with the above diagram to show the various aspects of handling photography. I tried to be comprehensive, although it may not be; I guess the items listed cover what I have learnt in slightly over a year.

The 1st row shows the technical factors of light and space that we can control.

The 2nd row shows situational factors that are not always within our control.

The 3rd row refers to the practical handling process, from on the camera, to on top of the camera, to after the shooting.

.


zoossh

Senior Member

1.4 What is there to note in the light?

The first thing that comes to mind may be exposure, which in general refers to the overall amount of light and the overall effect one sees in the final picture. This component of light is about tones and their distribution. More on this, and the factors pertaining to it, is described on page 3.

I am still reading through a book I just got from Riceball, "Lighting" by David Prakel. I find that having a book helps to consolidate what you have learnt, to learn more about what you thought you knew but didn't, and to think more about what you never thought of.

After some thought, I think light can be subdivided into exposure, colors and direction, with motion as a semi-related component.

What we see is one part of the electromagnetic spectrum, energy at different wavelengths, and that part is visible light. Our light comes from the sun, altered by the sky and atmosphere and reflected by the clouds and the surroundings: this is natural light. Then we get ambient light (or found light, or available light) from whatever light sources are around, from firelight to electric lights such as tungsten (incandescent) and fluorescent lamps. And lastly there is photographic light from our flashes, altered by diffusers and reflectors.

All this mixed light has a few different properties, described below.

The intensity of light affects tones and the resulting exposure, meaning how bright or how dark things are. It is tightly related to many critical functions of the camera and is a concept that needs to be grasped before you get some control over your DSLR.

The wavelength of light affects colors or hues, and with them the mood of the picture, as colors are directly associated with the way we identify things and time, for example the red of an apple or the golden rays of a sunset. This is often what we call the quality of light, which translates to the mix of wavelengths involved and the resulting color temperature. Understanding color balance is your next step towards making pictures that evoke emotions, because colors correlate with our vision and with what we associate with hues. We need to understand these additional properties of light in order to understand the use of filters and the white balance settings. In particular, human skin tone (although named "tone", the term more often describes the overall color and tonal status) is the one thing in which our eyes immediately pick up subtle deviations. With trained eyes, one can easily see a pale person with anaemia, a sallow person with renal failure and a jaundiced person with liver problems. Even with untrained eyes, anyone, including your grandparents, can tell you that this or that person looks too green, too red or too yellow.

The direction of light is of great importance but is less often talked about. It is a complicated factor tightly related to the spatial relationship. With natural light, one needs to understand the location, season, time of day, weather, altitude and surroundings. We make use of contours and shadows to increase depth and interest. We also watch the exposure in the highlights and shadows, which is a result of how the directional light is altered by the position of the photographer in the surroundings, the various components in the frame, and how those components relate to the direction of the light. The effect of a polariser also depends on the direction of light. Even in a studio setting, the use of fill flash, diffusers and reflectors depends on professional knowledge and understanding of the two factors above as well as the direction of light.

Motion is an intermediate factor that links light and space. Since we talk about light first, we talk about motion before spatial composition. Our eyes are in video mode, constantly processing inputs and sending them to the brain. Our camera is not. Photography is based on collecting light on the same sensor over a certain duration, so the motion we capture in pictures differs from what we actually see with our eyes. The magnitude of movement relative to the overall frame depends on the dimensions of the subject and the background, as well as the subject-to-camera distance and the focal length with its angle of view. The velocity of movement and the shutter duration determine whether we see a frozen moment or a streak of motion. Avoiding handshake is critical for sharp pictures, to make the most of what focus and depth of field offer, and handshake is itself a matter of motion, that is, of relative dimension and relative speed. Undesired subject motion adds to the effects of handshake, but subject motion depends on subject speed versus shutter duration and can occur even when there is no handshake. So a tripod may not solve the problem.

The above shot was taken on a tripod at Kota Kinabalu. Although the support was stable and the focus proper, the largest aperture of f/1.8 and the highest ISO of 1600 were still not enough to give a fast enough shutter speed, which ended up at 1/3 sec. The tripod and timer (but no mirror lockup) eliminated handshake, but the boat was floating on water and constantly moving, giving a soft image: the focused part was moving, and the background was affected by the limited depth of field.
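The link between subject speed, shutter duration, distance and focal length can be roughed out in a few lines. This is only a sketch under assumptions: a simple thin-lens magnification, a subject moving across the frame, and made-up numbers for the drift speed, distance, focal length and pixel pitch (none of them are measurements from the shot above).

```python
def blur_pixels(subject_speed_m_s: float, distance_m: float,
                focal_length_mm: float, shutter_s: float,
                pixel_pitch_mm: float) -> float:
    """Approximate streak length on the sensor, in pixels.

    Assumes the subject moves sideways across the frame and the camera
    itself is perfectly still (tripod, no handshake).
    """
    focal_length_m = focal_length_mm / 1000.0
    # Thin-lens magnification: image size divided by subject size.
    magnification = focal_length_m / (distance_m - focal_length_m)
    # How far the subject travels while the shutter is open, projected
    # onto the sensor and converted from metres to millimetres.
    image_shift_mm = subject_speed_m_s * shutter_s * magnification * 1000.0
    return image_shift_mm / pixel_pitch_mm

# Hypothetical numbers: a boat drifting at 5 cm/s, 10 m away, 50 mm lens,
# 0.006 mm pixels, compared at a slow and a fast shutter duration.
for shutter in (1 / 3, 1 / 250):
    print(f"{shutter:.4f} s -> {blur_pixels(0.05, 10.0, 50.0, shutter, 0.006):.2f} px")
```

With these assumed numbers the slow shutter smears the subject over many pixels even though the camera never moved, which is the point about the tripod not being enough.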

.


zoossh

Senior Member

2. Components of Light

2.1 Tones, hues and saturation

Another source of confusion. Surprisingly, a Google search led me to Gemmology made easy, which relates how the Gemological Institute of America (GIA) describes the color of gem stones.

Tones (Luma) = lightness or darkness

There are 11 stages of tone, ranging from 0 (for colourless) through 10 (for black).

Hues = type of color

As quoted from the above website, hue is the first impression we get when seeing colour, but then in its purest opaque form. GIA uses 31 hues based on the RGB model (red-green-blue). There are over 16 million hues; the human eye, however, is not capable of distinguishing them all.

Saturation (Chroma) = purity or intensity of the hue

The GIA saturation scale goes from 1 to 6. The lowest grade means that the hue looks grayish (for cold colours like green and blue) or brownish (for warmer colours like red, orange and yellow). The highest grade (6) is used for hues that are pure. We call that "vivid". From grade 4 onwards, there should be no gray or brown in the hue.

The issue becomes more apparent when we start to talk in depth about how you perceive exposure, which can often be affected by colors. These terms also become important when you are doing photo enhancement. With this out of the way, we can now start reading about exposure.

.


zoossh

Senior Member

4. Quality of light and colors

4.1 About Colors

The theoretical part is rather important for understanding the technical aspects of

1. how colors are managed by the sensor,

2. how they are interpreted in terms of white balance, and

3. how they are output on screen and in print

This may or may not be your main point of interest, but colors do play a role in composition, as many other things do.

The layman's tendency to see color as a combined unit of hue and exposure

For laymen, colors and tones are hard to tell apart. To many people, color is not just hue but a combined function of hue and tone. When a dark yellow and a bright yellow are put side by side, they will often be described as different colors even though they have the same hue and differ only in exposure or tone. In fact, two patches of the same hue with vastly different exposure are more likely to be treated as distinctly different colors than two patches of fairly similar hue with the same exposure, which are treated as close colors that are hard to tell apart. In short, it is the magnitude of the difference, in either hue or tone, that tells things apart.

There is, however, value in telling them apart, because they are controlled by different factors.
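The dark yellow versus bright yellow example can be checked with numbers. This is just an illustration using Python's standard `colorsys` module and two RGB values I picked as "bright yellow" and "dark yellow"; the HLS model it uses is one of several ways to split a color into hue, lightness and saturation.

```python
import colorsys

# Example RGB values (assumptions), each channel in the range 0.0 to 1.0.
bright_yellow = (1.0, 1.0, 0.0)
dark_yellow = (0.5, 0.5, 0.0)

def describe(rgb):
    """Return (hue in degrees, lightness, saturation) for an RGB triple."""
    hue, lightness, saturation = colorsys.rgb_to_hls(*rgb)
    return round(hue * 360), round(lightness, 2), round(saturation, 2)

print("bright yellow:", describe(bright_yellow))
print("dark yellow:  ", describe(dark_yellow))
```

Both come out with the same hue and saturation and differ only in lightness, which is the tone part that a layman tends to lump into "color".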

Colors can be used in

1. contrasting color patterns

2. complementary colors

3. complementary colors versus backgrounds: earthy background, bright subject

4. standout colors

For further identification and naming of colors, check this out.

4.2 Electromagnetic spectrum and wavelength

There are a few reasons why knowing a little about the science of light is useful for photography.

1. Understand what a color cast is when people comment on your photograph going wrong.

2. Start to visualise natural and artificial light and apply the correct white balance settings.

3. Understand the color wheel, to assist with color harmony if you do not have a natural instinct for colors.

4. Apply filters to assist in the enhancement of colors or tones.

Basically, visible light comes in a range of energies and wavelengths. A balanced mixture of the whole range appears as white light.

When the incident light is refracted, the different components, travelling at different speeds and bending at different angles, separate and create a rainbow of seven major colors, from red at one end (bent the least) to violet at the other (bent the most). This does not usually occur, but when it does it shows us the different components of visible light. Red has the lowest energy, the longest wavelength and the lowest frequency. Violet has the highest energy, the shortest wavelength and the highest frequency. Beyond the two ends lie the infrared and ultraviolet spectra (the ultraviolet in particular reaching the ground in small amounts compared with visible light), which are not visible to our eyes but nevertheless affect our pictures if nothing is done to counteract them. On the other hand, making use of these unseen spectra opens up another field of photography, in particular IR photography.
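The relationship between wavelength, frequency and energy can be worked out directly. A minimal sketch, assuming roughly 700 nm for the red end and 400 nm for the violet end (the exact limits of visible light are not sharp), and using the standard relations frequency = c / wavelength and energy = h x frequency:

```python
PLANCK_J_S = 6.62607015e-34       # Planck constant, joule seconds
LIGHT_SPEED_M_S = 299_792_458.0   # speed of light in vacuum, metres per second
JOULES_PER_EV = 1.602176634e-19   # one electronvolt in joules

def frequency_thz(wavelength_nm: float) -> float:
    """Frequency in terahertz for a wavelength given in nanometres."""
    return LIGHT_SPEED_M_S / (wavelength_nm * 1e-9) / 1e12

def photon_energy_ev(wavelength_nm: float) -> float:
    """Energy of one photon in electronvolts."""
    return PLANCK_J_S * LIGHT_SPEED_M_S / (wavelength_nm * 1e-9) / JOULES_PER_EV

# Approximate ends of the visible range (assumed values).
for name, wavelength in (("red", 700.0), ("violet", 400.0)):
    print(f"{name}: {wavelength:.0f} nm, {frequency_thz(wavelength):.0f} THz, "
          f"{photon_energy_ev(wavelength):.2f} eV")
```

The shorter the wavelength, the higher both the frequency and the energy, which is why violet sits at the high-energy end.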

As visible light is basically electromagnetic waves of different energies, it is invariably transmitted, absorbed or reflected, in different proportions. What our eyes see is a mixture of incident, altered and reflected light.

Incident light from the sun appears white because it is so bright and because all its components reach us directly with little alteration by the atmosphere.

However, most of the light we see in other parts of the sky and the sea is scattered or reflected light. Blue light, with its shorter wavelength, is scattered by the atmosphere much more readily than red, which is why the sky away from the sun looks blue; water also gradually absorbs red light, leaving the blue component. For the same reason, when the sun is low and its light passes through a thicker layer of atmosphere, more of the blue is bounced out of the direct beam, giving the typical warm colors of dawn and dusk. Likewise, dusty environments look red not just because the soil is red. These changes account for color casts: the overall light is altered by scattering (blue bounced away by air and dust) and by absorption and reflection (some colors absorbed by the surroundings).

Last of all, individual subjects appear in different colors because they absorb most components of visible light and reflect mainly the component we see as their color. If they absorb everything, they appear black. If they reflect everything, they appear white. This assumes the incident light is white. If the light carries a color cast, all colored items will reflect that change as well.
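A crude way to picture this is to multiply the incoming light by how much of each component a subject reflects. The sketch below is a big simplification: it uses only three channels (red, green, blue) instead of a full spectrum, and the reflectance of the "red apple" and the "warm cast" light are invented example values.

```python
def reflected_rgb(illuminant, reflectance):
    """Light leaving a surface: incoming light times the fraction reflected."""
    return tuple(round(i * r, 3) for i, r in zip(illuminant, reflectance))

white_light = (1.0, 1.0, 1.0)
warm_cast = (1.0, 0.8, 0.6)   # hypothetical low-sun light, weak in blue

red_apple = (0.8, 0.1, 0.1)   # hypothetical: reflects mostly red
black_item = (0.0, 0.0, 0.0)  # absorbs everything
white_item = (1.0, 1.0, 1.0)  # reflects everything

for name, surface in (("apple", red_apple), ("black", black_item), ("white", white_item)):
    print(name, reflected_rgb(white_light, surface), reflected_rgb(warm_cast, surface))
```

Under the warm light even the white item comes out warm, because it can only reflect what arrives; that is the color cast showing up on everything in the frame.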

.


zoossh

Senior Member

4.9 Reference for color nomenclature

More than 1,000 named colors for use in HTML Web page by John December

5. Colors in in-camera and post-camera processing

5.2 Color gamut, spectrum and space: Adobe RGB vs. sRGB

If one is confused and wants to sort out some definitions before the practical concerns, one may read this on gamut, color space, RGB color models and ICC profiles.

And if one is confused and interested in the practical issues only, we are basically left with whether to use the Adobe RGB (1998) or the sRGB color space to record our photos, and which profile to assign or convert to during raw conversion and photo editing. With much confusion all the way, some of us have come to think: for printing, choose Adobe RGB (1998) and for web display, choose sRGB; or save raw in Adobe RGB (1998) and convert to JPEG as sRGB......

However, more reading sheds some light on this issue. Some proponents support sRGB as a uniform format throughout. Their articles are:

sRGB vs. ADOBE RGB 1998 by Cambridge in Colour

sRGB vs. Adobe RGB by Ken Rockwell

Color Management Answers for Photoshop Elements

5.3 For the color blind

As suggested by Celeste Shuen,

"Professional Photoshop: The Classic Guide to Color Correction (5th Edition) and Photoshop LAB Color: The Canyon Conundrum and Other Adventures in the Most Powerful Colorspace by Dan Margulis teach colour correction with numbers. The author mentioned at a number of places in these books that he trained a number of people who were colour-blind and the results ranged from acceptable to excellent.

From some of the libraries under NLB: You can also purchase them from Computer Book Centre at Funan Centre or Kinokuniya at Takashimaya. These books are expensive and are challenging to read. It is not uncommon to read these books more than once and I found it useful to refer to the study groups at Digital Grin when going through them."

The above might work. You can try it if you are color blind. Do give feedback to help fellow forumers.

.


zoossh

Senior Member

Basic concepts about optics

1. Optical lines

1.1 Light and image

1.2 Optic axis - object, lens, image

1.3 Focus - point to point divergence and convergence

2. Optical diagrams

2.1 A point to point diagram

2.2 An edge to edge diagram

2.3 A field to field diagram

2.4 Field and angle of view

2.5 The nodal point or optical centre

3. Focus

3.1 Focal point

3.2 Circle of confusion

3.3 Depth of field

3.4 Hyperfocal distance

4. Lens elements & properties

4.1 Simple lens setup and focus from infinity

4.2 Focal length

4.3 Thin Lens Equation - image distance and object distance

4.4 Both o and i > f

5. Image distance and its limits

5.1 image distance increases with focal length

5.2 limited maximum image distance limits maximum focal length

5.3 the mirror distance < image distance

5.4 retrofocus design

6. Subject distance and its limits

6.1 No limits to infinity

6.2 reciprocal relationship between image and object distance at fixed focal length

6.3 minimum object distance tallies with maximum image distance

6.4 minimum focus distance

6.5 minimum working distance

7. The Concept of Frame

7.1 The borders of physical vision and photography

7.2 Eventual photographic output is two dimensional within a square or rectangular frame

7.3 Image circle versus the frame

7.4 The image frame versus the viewfinder frame.

8. The Concept of Size

8.1 Physical image size

8.2 Apparent image size in frame

8.3 Distance, circumference of view and size - Why do things get smaller when further away?

8.4 The three things that affect size in the frame

8.5 Close focusing and macrophotography

9. The Concept of Perspective

9.1 What perspective is about.

9.2 Perspective: Correlation to recognisable sizes

9.3 Perspective: Near and far relationship to subject of interest

9.4 Angle of view and perspective.

9.5 Distortion in wide angle and very narrow angle

9.6 Why the periphery in the wide angle is distorted?

.


zoossh

Senior Member

1. Optical lines

1.1 Light and image

The difference in painting from photography lies in that painting relies on the painter's hand (or legs and mouths for some), whereas in photography, it is a replicate (in a sense) of what is in front of the camera. Although there can be overlapping, painting is at total will of the artist making a sun a square if he wants and drawing a monster that do not exist in any similarities, whereas photographer makes use of the natural properties of light and mould it accordingly by alteration of exposure, focus, focal length and motion etc. Photography does not set off at will like a painting, but it can be, and most of the time is, taken in a split of a second, replicating an image that is pre-fixed by the subject but altered in certain aspect.

The first step in the basics of optics is to know the role optics plays in forming an image. For an image to be formed, light travelling from a triangle should fall on the sensor as a triangle. It can be an inverted, bulging, stretched or simply bigger triangle, but it is still a triangle with its three points. The retention of certain spatial characteristics of the original object in the image is one of the key factors in identifying the subject: you recognise an apple as an apple and a banana as a banana.

Light emitted or reflected from the subject and falling on the sensor is the bridge that carries the spatial relationships of the subject into an image.

However, in the real world a single light source such as a candle flame emits light in all directions. Pt A and pt B in the flame both send light, at the same time, onto tile C and tile D, which are neighbouring tiles on the wall. Both tiles receive light from pt A and pt B simultaneously, so there is no difference between what tile C and tile D receive, and there will be no image. For an image to form, light from pt A should fall only onto C and light from pt B only onto D, so that the spatial relationship between pt A and pt B is replicated on tile C and tile D.

To limit the pathway of that diverging light, the camera obscura came about: a pinhole and a dark chamber that excludes any stray light, making sure that light from pt A travels in a line of its own through the pinhole and falls onto tile C, whereas light from pt B travels along a separate path, also through the pinhole, and falls onto tile D. The sharpness of the image relies on light travelling in straight lines, and on the fact that light, despite being divergent, can be narrowed to a thin cone if it is allowed only a limited pathway, through a pinhole. The smaller the pinhole, the smaller the base of the cone, the more accurately pt A and pt B are placed and thus the sharper the image (at least until the pinhole becomes so small that diffraction starts to blur the image again). The bigger the pinhole, the bigger the area over which pt A and pt B spread towards each other. The catch is that a very small pinhole admits very little light, while the compromise of a bigger pinhole gives a less sharp, fuzzy picture.
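The trade-off above can be put into rough numbers with similar triangles. The sketch below is only an illustration: the function names and the sample distances are made up for this example, it treats light as straight lines only, and it ignores diffraction entirely. One point of the subject sends a cone of light through the pinhole, and the cone keeps widening until it hits the wall, so the patch it leaves grows with the pinhole diameter.

```python
def pinhole_blur_diameter(pinhole_diameter, object_distance, screen_distance):
    """Diameter of the patch of light that ONE point of the subject casts on the screen.

    The cone has its tip at the subject point, is as wide as the pinhole when it
    reaches the pinhole, and keeps widening until it reaches the screen.
    All lengths must be in the same unit (e.g. mm). Straight-line geometry only.
    """
    if pinhole_diameter < 0 or object_distance <= 0 or screen_distance <= 0:
        raise ValueError("diameter must be >= 0 and distances must be > 0")
    return pinhole_diameter * (object_distance + screen_distance) / object_distance


def patches_overlap(point_separation, pinhole_diameter, object_distance, screen_distance):
    """True if the patches from pt A and pt B run into each other on the screen."""
    # The centres of the two patches are the straight lines through the middle of the pinhole.
    centre_separation = point_separation * screen_distance / object_distance
    blur = pinhole_blur_diameter(pinhole_diameter, object_distance, screen_distance)
    return centre_separation < blur


# Hypothetical numbers: pt A and pt B are 10 mm apart, 1000 mm in front of the
# pinhole, and the wall is 100 mm behind it.
for diameter in (1.0, 0.2):
    blur = pinhole_blur_diameter(diameter, 1000.0, 100.0)
    mixed = patches_overlap(10.0, diameter, 1000.0, 100.0)
    print(f"pinhole {diameter} mm -> patch {blur:.2f} mm, A and B overlap: {mixed}")
```

With these made-up numbers the 1 mm pinhole lets the patches of pt A and pt B run into each other, while the 0.2 mm pinhole keeps them apart, at the price of a much smaller opening for light to get through.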

The lens, which mimics what our eyes do, came into photography after it had been adopted in spectacles to help our vision. The key idea of the lens is to open up an area large enough to let in sufficient light, unlike a pinhole, while still creating sharpness by making the light that diverges from any point of the source converge back to a single point in the image. The way light converges and diverges according to the relationship between the subject, the lens and the image thus forms the basics of optics.

1.2 Optic axis - object, lens, image

According to Wikipedia (2008 Apr 13), the optic axis is an imaginary line that defines the path along which light propagates through the system. It runs from the subject/object in the centre of the frame, through the centres of the simple lenses and mirrors, and onto the centre of the sensor.

It lies at the centre of the many optical lines of light that link what is reflected or emitted off the object and its background, through the lens, onto the sensor. Each pixel of the sensor can be thought of as defining the calibre of one such line, an imaginary one that contains the pathway of many photons. The optical axis is the line in the middle of all these, and the system has rotational symmetry around it.

This axis is used as the reference line for describing, in a simplified manner, the various distances whose relationship defines focal length and its application in photography.

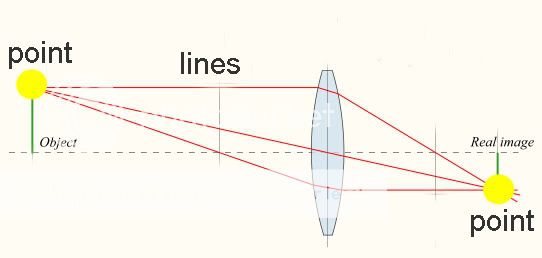

1.3 Focus - point to point divergence and convergence

As mentioned earlier, every light source spreads light in all directions unless it is hooded like a desk lamp, where the light is reflected to shine in one general direction, and even then the light spreads over a large area.

For details of the subject (which mostly reflects but may also emit light) to be sharp and to form an image, this spreading light needs to be converged. Our eyes have the ability to converge the divergent light and focus it at one point at a certain distance behind the lens, namely on the retina. A camera works on the same principle.

The various lens components have different converging abilities, and their arrangement can vary to accommodate different focal lengths in zooms, but the whole lens unit must in the end combine to perform a single function: to converge light.
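To see how components with different converging abilities can add up to one converging unit, here is a small sketch using the textbook formula for two ideal thin lenses a distance d apart, 1/f = 1/f1 + 1/f2 - d/(f1*f2). The formula, the function name and the sample focal lengths are assumptions brought in for illustration. Real zooms use many more elements, so take this only as a toy model of how moving one component changes the focal length of the whole unit.

```python
def combined_focal_length(f1, f2, separation):
    """Focal length of two ideal thin lenses placed `separation` apart.

    A positive focal length means a converging lens, a negative one a diverging lens.
    All lengths must be in the same unit (e.g. mm).
    """
    power = 1.0 / f1 + 1.0 / f2 - separation / (f1 * f2)
    if power == 0:
        raise ValueError("this spacing neither converges nor diverges light")
    return 1.0 / power


# Hypothetical pair: a 100 mm converging lens followed by a -50 mm diverging lens.
# Sliding the second lens changes the focal length of the pair, which nevertheless
# stays a converging unit (positive focal length) at both spacings.
for spacing in (60.0, 70.0):
    f = combined_focal_length(100.0, -50.0, spacing)
    print(f"spacing {spacing} mm -> combined focal length {f:.0f} mm")
```

In this toy pair, shifting the second lens by 10 mm takes the combined focal length from roughly 500 mm to roughly 250 mm.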

The image shows how the tip at the top of the subject ends up on the sensor. On the left is a point source, on the right is a point destination, and in the middle, at the lens, is the field of that single point source. Picture from Wikipedia, redistributed under the terms of the GNU Free Documentation License as granted by Aurelio A. Heckert, creator of the image.

As shown in the diagram, light from the top part of the object diverges through many areas of the lens, but eventually converges back to a single point on the opposite side of the optic axis. That point is equidistant from the axis only when the image is the same size as the object; otherwise its distance from the axis is scaled up or down in proportion. Other points of the object likewise converge to points on the opposite side of the optic axis, scaled by the same proportion, thereby forming an image that is inverted but otherwise keeps the layout of the object.
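The convergence described above can be checked with a few lines of arithmetic. This is a rough sketch under stated assumptions: an ideal thin lens, rays at small angles to the axis, and the standard thin lens formula 1/f = 1/u + 1/v, none of which comes from the post itself. The function names and the numbers are made up for the example. It sends rays from one point of the subject through different heights of the lens and looks at where each one lands.

```python
def image_distance(focal_length, object_distance):
    """Distance behind an ideal thin lens where a point is brought to focus (1/f = 1/u + 1/v)."""
    if object_distance <= focal_length:
        raise ValueError("the object must be farther away than one focal length")
    return 1.0 / (1.0 / focal_length - 1.0 / object_distance)


def landing_height(point_height, object_distance, lens_height, focal_length, screen_distance):
    """Height above the optic axis at which one ray hits the screen.

    The ray leaves the subject point, crosses the lens at `lens_height`,
    is bent by the lens, and carries on to a screen `screen_distance` behind it.
    """
    slope_in = (lens_height - point_height) / object_distance
    slope_out = slope_in - lens_height / focal_length  # bending by an ideal thin lens
    return lens_height + screen_distance * slope_out


# Hypothetical set-up: 50 mm lens, subject point 10 mm above the axis, 200 mm away.
f, u, h = 50.0, 200.0, 10.0
v = image_distance(f, u)
lens_heights = (-10.0, -5.0, 0.0, 5.0, 10.0)

# Screen at the focused distance: every ray lands at the same height, below the axis.
heights = [landing_height(h, u, y, f, v) for y in lens_heights]
print([round(x, 3) for x in heights])

# Screen 10 mm too far back: the same rays land at different heights (a blurred patch).
blurred = [landing_height(h, u, y, f, v + 10.0) for y in lens_heights]
print([round(x, 3) for x in blurred])
```

With the screen at the focused distance, all five rays meet at about 3.33 mm below the axis, on the opposite side from the 10 mm high subject point and scaled by v/u. Move the screen and they spread out again, which is what an out of focus point looks like.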

.


zoossh

Senior Member

2.1 A point to point diagram

I see that drawing the optics and how it works can cause confusion. In the end, I worked out that there are two ways of seeing it. One is a point to point diagram, the other is a field to field diagram.